If users don’t seem to care enough about their digital privacy to do anything differently, why should the industry care so much?

When I worked at large security companies like Bell Labs and CA, we did study after study that revealed a deep cognitive dissonance between what people said was important to them and what they actually did online.

When we asked 100 people whether they cared about online security, all 100 resoundingly said “YES.” When we asked those same 100 people whether they would do anything differently online because of security concerns, they answered in unison with a universal “NO.” This dissonance puzzled me for years.

Fast forward 10 years and we see the same dynamic with user privacy. Everyone believes user privacy is important, but users don’t seem to care enough to change their behavior. They aren’t moving to “privacy first” browsers en masse. They aren’t limiting their use of platforms like Facebook that overtly abuse users’ privacy.

Why do people think one way but act differently?

If users don’t care enough about their digital privacy to do anything differently, why should the industry care so much?

It’s a good question, and it needs to be answered in the context of what the Internet was, and was not, designed to do.

The Internet was built to be a content-serving engine requiring little more than the ability to discover, read, contribute and distribute content. Within this model, in the early years, company sites were little more than hubs for digital collateral. There was no user tracking, no personalization and few user-initiated actions.

Once eCommerce came online, some user-level security was required, and SSL was born. Yet consumers had already been acclimated to a web where they simply interacted with whatever “experience” the website had ordained. They had little say in how their interaction went.

The next big leap for the Internet was the emergence of digital advertising everywhere: on social media, on publisher sites and on long-tail blogs with a virtually infinite amount of content.

Digital advertising became the killer application of the Internet, so the industry needed new tools for targeting users and optimizing the user experience. These new tools perpetuated the one-way conversation: users were passive recipients of whatever websites chose to do with them and to them.

This dynamic explains the cognitive dissonance we saw around security years ago and what we see today with user privacy. Users do care about these issues, but all they have is a binary choice between two bad alternatives: don’t use the Internet at all, or capitulate totally to the whims of site owners (publishers and brand sites), since there is not much they can do about it anyway. Understandably, people simply surrendered any proactive management of what happens to them on the Internet.

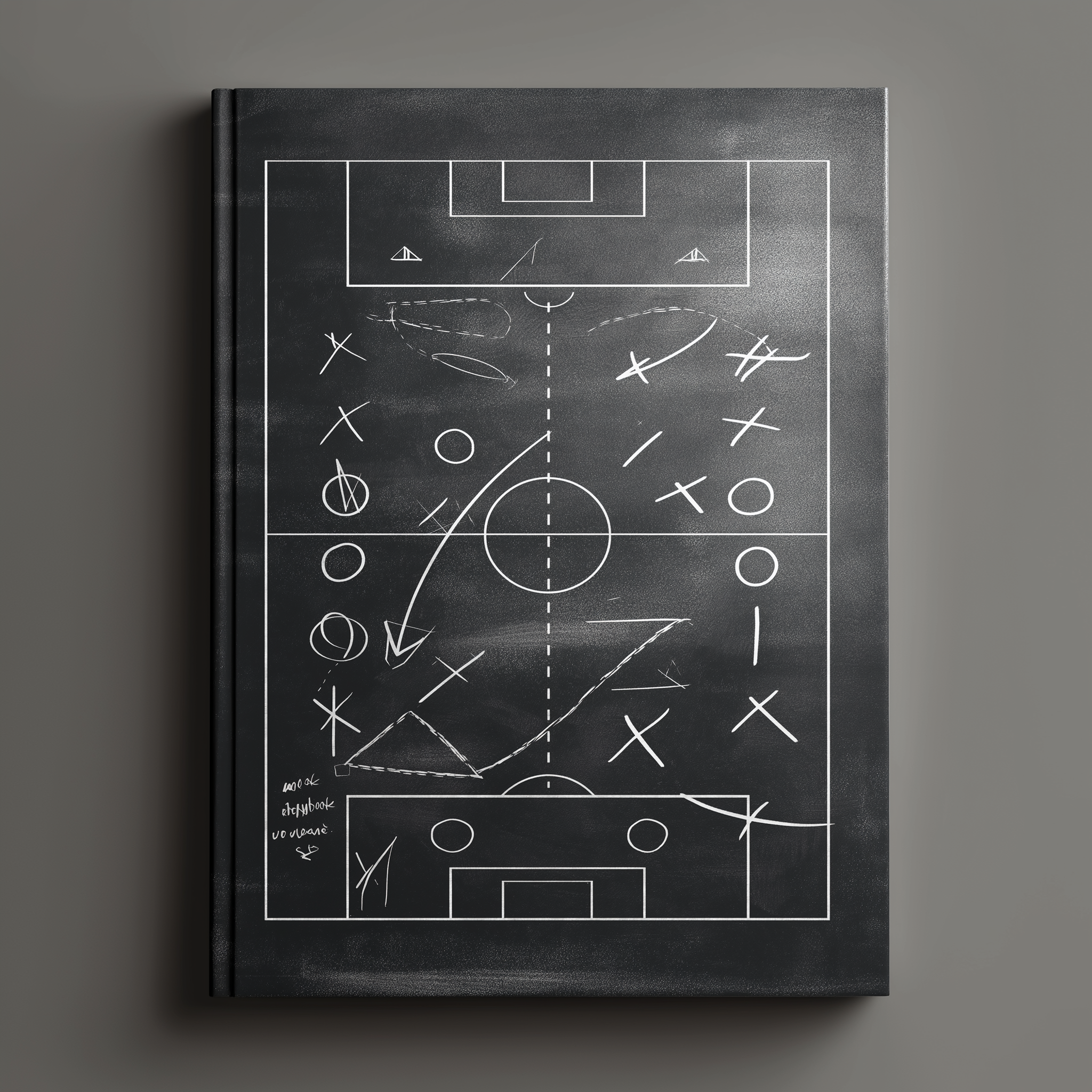

In this context, we can see that the battle for user privacy is not about privacy at all. It is about control.

Adtech firms, Facebook and Google have no incentive to do the right “privacy” thing, because if real opt-in mechanisms take hold and consumers gain control over their digital world, adtech has a lot to lose financially.

This explains why adtech firms are fighting the privacy wave with every breath as policy makers and governments try to return control of user experiences and data to users.

For brands, this situation is untenable. They know they face significant risks if they violate the rules of the privacy road. Yet the road to a true “privacy first” adtech model is paved with confusion and chaos, shining a spotlight on the irreconcilable tension between users’ right to control their own data and adtech’s presumed right to control all that data.

It’s not clear who is writing the rules that matter. It’s not easy to deploy a sane and sustainable set of practices and guidelines in today’s Wild West of cookie data, where everyone online is tracked. (For more on the challenges of privacy-first standards, see the recent post “Who’s writing the data privacy rules anyway?” at https://trustwebtimes.com/whos-writing-the-data-rules-anyway/.)

Sadly, though, it’s now obvious to everyone that user privacy could be the black swan event that significantly undermines the monetization model of “scalable reach,” despite adtech’s outward assurances that its platforms can be re-engineered to be “privacy first.”

The privacy reality hits adtech hard.

According to AdExchanger, true user “opt-in” rates are “ugly,” with barely 13% of users choosing to opt in. These low rates have a direct impact on adtech revenue: without large numbers of users that adtech can track, “poof,” there goes the “scalable reach” that adtech relies on to make a lot of money.

As the industry tries to adapt to all the “new” privacy initiatives, from next-generation Universal ID projects to Google’s new privacy schema, these approaches are shaping up to be a mere veneer of privacy practice. Under the hood, adtech platforms are swapping out cookies for new tracking mechanisms, such as email addresses, to maintain their “scalable” tracking practices. Google, the great privacy pretender, will use cohort segmentation that can be reverse-engineered to track people, and will assume that a user who is logged into any Google property (e.g., Gmail) agrees to be tracked everywhere they go.

How is that any form of true “opt-in”? Spoiler alert: it’s not. (Here is a detailed explanation: https://trustwebtimes.com/why-i-object-to-adtechs-mega-id-initiatives-and-you-should-too/.)

The privacy debate, like many others in adtech, revolves around a few basic questions of control: control of data, control of the user experience, and control of how adtech makes oodles of money while willfully ignoring the reality that users don’t want to be tracked without their consent.

Striving to achieve the highest levels of user privacy works directly against the adtech monetization model. As always, if you want to understand something, just follow the money.